One of the key pieces of wisdom regarding any kind of development project is that all software problems are inherently hardware problems. This is one of many reasons why devices like the iPhone and the Xbox have been so popular over the years. When software and hardware integrate properly, and code is written to specs that take full advantage of the platform, great things become possible.

When it comes to web development, it serves developers well to start with hardware when diagnosing performance issues. While the habitual response is to assume something is wrong with the code, the real cause is often far simpler, and fixing it can have a much more significant impact. To get started, we should look at the universal performance inhibitor: available memory.

RAM

While modern operating systems are ostensibly capable of memory management, the truth is that some are inadequate when it comes to memory conservation, especially on systems with high process loads. By and large, the average web server shouldn’t require an outsized RAM installation, but if the software, and in particular the operating system and the web server, isn’t handling allocations and de-allocations fast enough, it can cause enormous bottlenecks. Processes that are stalled waiting for memory leave the CPU idle for long periods of time and can cause serious network issues as well.
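As a first check, it can help to see how much memory is actually available before suspecting the code. The following is a minimal sketch, assuming a Linux host that exposes `/proc/meminfo`. The function names and the sample figures are hypothetical and only illustrate the idea.

```python
def parse_meminfo(text: str) -> dict[str, int]:
    """Parse /proc/meminfo-style text into {field name: numeric value}.

    Most fields are reported in kB; the unit suffix is ignored here.
    """
    values = {}
    for line in text.splitlines():
        name, sep, rest = line.partition(":")
        if not sep:
            continue  # skip lines that are not "Name: value" pairs
        parts = rest.split()
        if parts and parts[0].isdigit():
            values[name.strip()] = int(parts[0])
    return values


def available_fraction(meminfo: dict[str, int]) -> float:
    """Return MemAvailable / MemTotal as a value between 0 and 1."""
    return meminfo["MemAvailable"] / meminfo["MemTotal"]


# Hypothetical sample data. On a real Linux server, read the file instead:
#     text = open("/proc/meminfo").read()
SAMPLE = """\
MemTotal:        8000000 kB
MemFree:          500000 kB
MemAvailable:    2000000 kB
"""

info = parse_meminfo(SAMPLE)
print(f"{available_fraction(info):.0%} of RAM available")  # prints "25% of RAM available"
```

A consistently low figure under normal load suggests the bottleneck is memory rather than application logic.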

Database

In nearly any multi-tier application, the database is accessed multiple times per client request. The heaviest of these accesses are naturally write operations, but if the software performs unnecessary or redundant database lookups, or does not take full advantage of database features such as transactions and rollbacks, the cumulative cost can become a serious problem, especially when combined with inadequate hardware specifications.

Compression

All modern web servers and browsers can exchange compressed content. Even if a web application is server-heavy and process-heavy, the final delivery of content should always take advantage of compression and “minified” files. Text assets such as large client-side code modules and complex CSS benefit most from transfer compression, while images should be served in an efficiently compressed format at an appropriate size. This is particularly important for apps that are widely distributed or that expect high loads and many simultaneous users. The processing overhead of compression is usually small compared to the bandwidth impact and potential latency issues caused by unnecessarily large assets.
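To get a feel for the savings, the sketch below compresses a block of repetitive CSS with Python's standard `gzip` module and compares the sizes. The stylesheet content is made up for illustration. Real ratios depend on the asset, and in production the web server usually applies the compression itself.

```python
import gzip


def compression_report(payload: bytes, level: int = 6) -> tuple[int, int]:
    """Return (original size, gzip-compressed size) in bytes."""
    compressed = gzip.compress(payload, compresslevel=level)
    return len(payload), len(compressed)


# Hypothetical stylesheet: repetitive text compresses very well.
css = b".card { margin: 0 auto; padding: 16px; border: 1px solid #ccc; }\n" * 500

original, compressed = compression_report(css)
saved = 1 - compressed / original
print(f"{original} bytes -> {compressed} bytes ({saved:.0%} smaller)")
```

Already-compressed formats such as JPEG or PNG gain little from a second pass, which is why this kind of compression is normally reserved for text assets.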

Testing

One of the most important benefits of both automated and iterative testing is the ability to check compatibility across client software and devices. With roughly half of all traffic now coming from mobile devices, it is vital that any web site, and in particular any application on a site, be evaluated for its responsive features and for its accessibility and functionality on various resolutions, browsers and operating systems.

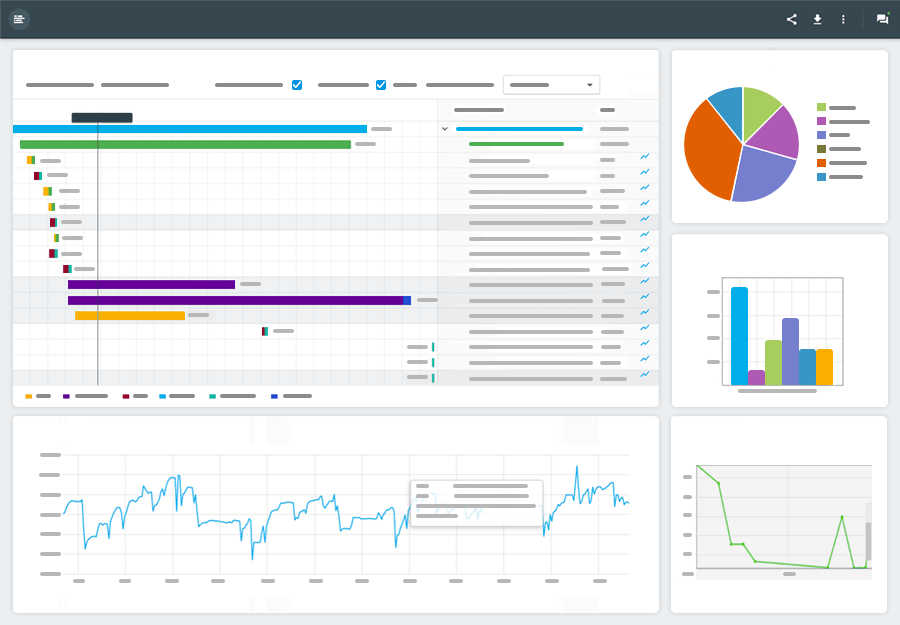

It is possible to automate the process of testing web applications, so evaluating the quality of an application can be built into any development cycle or workflow using standard tools and record-keeping.
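As a small illustration of automated checks across resolutions, the sketch below uses Python's standard `unittest` module. Everything in it is an assumption made for the example: the `layout_for_width` function, its breakpoints and the viewport widths are hypothetical stand-ins for whatever responsive logic a real application has.

```python
import unittest

# Hypothetical breakpoints in CSS pixels; real projects define their own.
MOBILE_MAX = 599
TABLET_MAX = 1023


def layout_for_width(width: int) -> str:
    """Pick a layout name for a given viewport width."""
    if width <= 0:
        raise ValueError("viewport width must be positive")
    if width <= MOBILE_MAX:
        return "mobile"
    if width <= TABLET_MAX:
        return "tablet"
    return "desktop"


class LayoutTests(unittest.TestCase):
    def test_common_viewports(self):
        cases = {320: "mobile", 375: "mobile", 768: "tablet", 1024: "desktop", 1920: "desktop"}
        for width, expected in cases.items():
            # subTest reports each failing width separately
            with self.subTest(width=width):
                self.assertEqual(layout_for_width(width), expected)

    def test_rejects_invalid_width(self):
        with self.assertRaises(ValueError):
            layout_for_width(0)


# Run the suite programmatically so it can be called from a build script.
suite = unittest.defaultTestLoader.loadTestsFromTestCase(LayoutTests)
result = unittest.TextTestRunner(verbosity=0).run(suite)
```

Checks like these run in seconds, so they can be executed on every commit. Full browser and device coverage still needs dedicated tooling.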

Asynchronous Load

Modern web publishing often takes place on a screen that can only display one or two images and a paragraph of text at a time, at least if that text is meant to be readable. In those circumstances, it doesn’t make sense to load an entire site before rendering what should be visible above the fold. Rather, it is better to get what should be initially visible on the screen as fast as possible, and then load the other page assets as bandwidth becomes available through the process of asynchronous loading. This can present users with much more responsive applications while conserving bandwidth until it is needed.
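The ordering described above can be simulated with Python's `asyncio`. This is only a sketch of the idea: in a browser the same effect comes from client-side techniques, and the asset names, delays and `on_first_paint` callback here are hypothetical.

```python
import asyncio


async def fetch_asset(name: str, delay: float) -> str:
    """Stand-in for a network fetch; sleeps instead of doing real I/O."""
    await asyncio.sleep(delay)
    return name


async def load_page(critical, deferred, on_first_paint):
    """Load above-the-fold assets first, render, then load the rest."""
    # Critical assets are fetched concurrently, and nothing else competes with them.
    first = await asyncio.gather(*(fetch_asset(n, d) for n, d in critical))
    on_first_paint(first)  # the user can see the page at this point
    # The remaining assets load in the background, also concurrently.
    rest = await asyncio.gather(*(fetch_asset(n, d) for n, d in deferred))
    return list(first) + list(rest)


loaded = asyncio.run(
    load_page(
        critical=[("hero.jpg", 0.01), ("above-fold.css", 0.01)],
        deferred=[("gallery.js", 0.02), ("footer.png", 0.02)],
        on_first_paint=lambda assets: print("first paint with:", assets),
    )
)
print("all assets:", loaded)
```

The first paint waits only for the critical assets, so the time until the user sees something does not grow with the total size of the page.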

Unlike binary desktop programs, web applications rely on a wide variety of hardware-limited resources to get from server to client. Because there are so many reasons an application can be slower than expected, it is rarely advisable to immediately look to the code for answers. Rather, the logistical and practical problems should be solved first. If issues remain after that, they can be investigated in the code with far less confusion.